People all over the world come to TikTok to express themselves creatively and be entertained. To maintain a safe and welcoming environment for community members, TikTok works to be transparent about its actions along the way. Today, TikTok is releasing its fifth global Community Guidelines Enforcement Report as it continues to bring visibility to the critical work of moderating content and to earn the trust of its community.

Since 2019, TikTok has published Transparency Reports, expanding the information provided with each one. Additions include insights into the actions TikTok takes to protect the safety and integrity of the platform, such as the nature of content removed for violating TikTok policies and the number of violative ads rejected. The platform has heard from the community, civil society organizations, and policymakers that this information helps them understand how TikTok operates and moderates content. To share these insights more frequently, TikTok is now publishing quarterly Community Guidelines Enforcement Reports as part of its broader transparency reporting efforts. Because of the nature of processing and responding to legal requests, TikTok will continue to publish those data bi-annually.

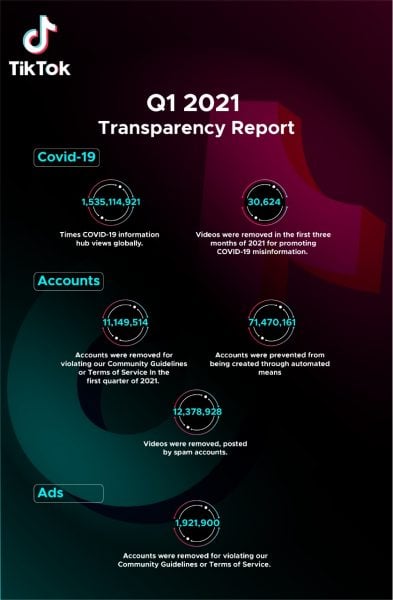

Here are some key insights from the Q1 2021 report, which can be read in full here.

- 61,951,327 videos were removed for violating TikTok Community Guidelines or Terms of Service, which is less than 1% of all videos uploaded on the platform.

- 82% of these videos were removed before they received any views, 91% before any user reports, and 93% within 24 hours of being posted.

- 1,921,900 ads were rejected for violating advertising policies and guidelines.

- 11,149,514 accounts were removed for violating TikTok Community Guidelines or Terms of Service, of which 7,263,952 were removed for potentially belonging to a person under the age of 13. This is less than 1% of all accounts on TikTok.

- 71,470,161 accounts were blocked from being created through automated means.

TikTok has continued to help the industry push forward on transparency and accountability around user safety. To bring more visibility to the actions TikTok takes to protect minors, the platform added to this report the number of accounts removed for potentially belonging to an underage person. This builds upon previous work to strengthen default privacy settings for teens, offer tools to empower parents and families, and limit features like direct messaging and livestream to those aged 16 and over. To continue strengthening its approach to keeping the platform a place for people 13 and over, TikTok aims to explore new technologies to help with the industry-wide challenge of age assurance.

Farah Tukan, Head of Public Policy at TikTok MENA said: “Safety on TikTok has always been our number one priority, both globally and in the MENA region. Whilst most creators use the platform in a positive and creative way, there are some that unfortunately abuse our community guidelines. These acts are not tolerated and heavily acted upon, first by our advanced algorithm and then manually from our moderation team.

“The Q1 Transparency Report highlights and explains some of the key activities and initiatives we have accomplished from the beginning of the year to date to ensure that our user experience is safe. We are continuously working towards creating a harmonious and joyful environment for our community. Over the past quarter we’ve enhanced many safety features and collaborated with regional organisations to strengthen the entire online ecosystem, including UNICEF to counter bullying online. Ultimately, we will continue to offer a place for our creators to feel safe and enjoy consuming and sharing content.”

This report also includes TikTok’s first security overview of its global bug bounty program, which helps proactively identify and resolve security vulnerabilities. The program strengthens TikTok’s overall security maturity by encouraging security researchers around the world to identify and responsibly disclose bugs to TikTok teams so they can be resolved before attackers exploit them. TikTok reported that in the first quarter of 2021 the platform received 33 valid submissions and resolved 29 of them. It also received and published 8 public disclosure requests. With regard to response efficiency, TikTok has an average first response time of 8 hours and a resolution time of 30 days, and it takes 3 days on average to pay out a bounty.

In the future, TikTok will publish this data at its online transparency centre, which the platform is working to overhaul into a home for all transparency reporting and other information about its efforts to protect the safety and integrity of the platform.